Cyberattacks have never been more successful than in the last year, and an alarming increase in cybercrime is expected for 2020, factoring in the surge of critical IT assaults that arose with the spread of COVID-19. In a world where the capabilities of artificial intelligence (AI) and machine learning (ML) are continuously growing, the potential for attackers to exploit them cannot be ignored.

In 2019, four out of five organizations fell victim to at least one successful attack on their IT security, according to CyberEdge’s annual Cyberthreat Defense Report (CDR). And with the number of corporate endpoints growing as the need for remote working increases, the traditional cybersecurity strategy of detecting malware as it crosses the company perimeter is no longer feasible. That’s why IT professionals are most concerned about the security of IT components that are relatively new, such as containers, or those that are infrequently connected to the corporate network and harder to monitor, such as tablets, mobile phones and other IoT devices.

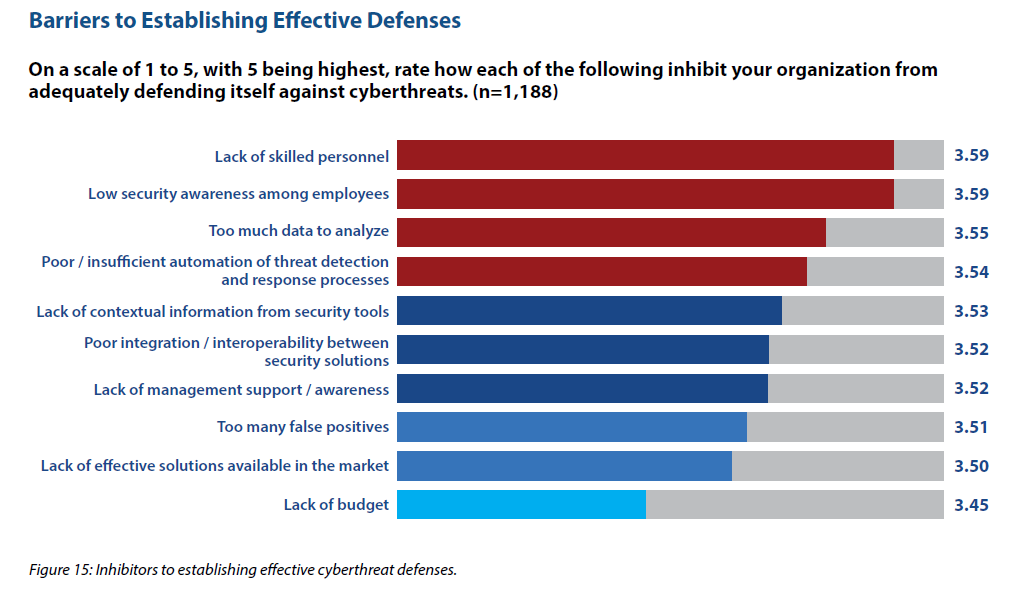

Figure 1: Inhibitors to establishing effective cyberthreat defenses. Source: http://cyber-edge.com/cdr/

The CDR also identifies “Too much data to analyze” and “Poor/insufficient automation of threat detection and response processes” among the barriers to establishing effective IT defenses. Crucially, these are the types of problems that artificial intelligence can help solve. By integrating and analyzing disjointed cyber data, it is possible to extract evidence-based insights into an organization’s unique threat landscape, enabling defenders to understand who the adversaries are, what the consequences of an attack could be, which assets could be compromised, and how to detect or respond to a threat.
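To make the idea of integrating disjointed data concrete, here is a minimal Python sketch. It assumes two hypothetical log sources (firewall and authentication events) that share a source IP field; the field names, sample records and the rule for "suspicious" are illustrative assumptions, not taken from the CDR or from any specific product.

```python
from collections import defaultdict

# Hypothetical, hand-made records standing in for two separate log sources.
firewall_events = [
    {"src_ip": "203.0.113.7", "action": "blocked", "port": 22},
    {"src_ip": "198.51.100.4", "action": "allowed", "port": 443},
    {"src_ip": "203.0.113.7", "action": "allowed", "port": 443},
]
auth_events = [
    {"src_ip": "203.0.113.7", "user": "admin", "result": "failure"},
    {"src_ip": "203.0.113.7", "user": "admin", "result": "failure"},
    {"src_ip": "192.0.2.10", "user": "alice", "result": "success"},
]


def correlate_by_ip(firewall, auth):
    """Merge both sources into one per-IP summary of negative signals."""
    summary = defaultdict(lambda: {"blocked": 0, "failed_logins": 0})
    for event in firewall:
        if event["action"] == "blocked":
            summary[event["src_ip"]]["blocked"] += 1
    for event in auth:
        if event["result"] == "failure":
            summary[event["src_ip"]]["failed_logins"] += 1
    return dict(summary)


def suspicious_ips(summary):
    """Return IPs that show negative signals in both sources (assumed rule)."""
    return sorted(
        ip
        for ip, stats in summary.items()
        if stats["blocked"] > 0 and stats["failed_logins"] > 0
    )


summary = correlate_by_ip(firewall_events, auth_events)
print(suspicious_ips(summary))
```

Neither source alone says much about an address, but joining them on a shared key surfaces the one that misbehaves in both. Real deployments would join on more keys (user, host, time window) and far larger volumes.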

AI and ML can be thought of as sentinels guarding IT assets and devices 24/7, without any loss of attention. Machine learning, the AI discipline that aims to make a computer learn automatically from experience, can lessen the burden of a heavy cybersecurity workload on the security team and reduce human error and oversights. ML techniques are also highly customizable to the specific requirements of a company. However, incorporating AI and ML into your cybersecurity strategy isn’t as simple as you may think.

Looking at the positives, AI systems allow data to be automatically processed and analyzed, while ML helps define the correlations between events and enables their monitoring to identify indicators of compromise. AI techniques such as natural language processing (NLP) and image recognition can enable us to detect threats and fraud in languages we don’t understand, and in images and videos we would never normally have time to view and interpret. Overall, AI can be seen to augment human capabilities by processing more data in shorter time frames, and by learning patterns associated with data breaches and other negative outcomes, including correlations and anomalies that no human would ever think of.
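As a rough illustration of monitoring events for anomalies, the sketch below learns a baseline from past observations and flags new values that deviate strongly from it. It is a simple statistical stand-in (a z-score test), not a trained ML model; the hourly login counts, the function names and the threshold of three standard deviations are all assumptions made for the example.

```python
from statistics import mean, stdev


def fit_baseline(history):
    """Learn a simple baseline (mean and sample standard deviation)."""
    return mean(history), stdev(history)


def is_anomalous(value, baseline, threshold=3.0):
    """Flag a value lying more than `threshold` deviations from the mean."""
    mu, sigma = baseline
    if sigma == 0:
        # A perfectly flat history: any different value is unusual.
        return value != mu
    return abs(value - mu) / sigma > threshold


# Hypothetical hourly login counts observed during normal operation.
history = [12, 15, 11, 14, 13, 12, 16, 14, 13, 15]
baseline = fit_baseline(history)

# New observations to monitor; 95 logins in one hour stands out.
new_counts = [14, 13, 95, 12]
flagged = [count for count in new_counts if is_anomalous(count, baseline)]
print(flagged)
```

A production system would replace the fixed threshold with a model trained on many signals at once, but the workflow is the same: learn what normal looks like, then surface what does not fit.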

On the other hand, cybercriminals are also using AI. They are tweaking their malware code so that security software no longer recognizes it as malicious. According to the Nokia Threat Intelligence Report 2019, cybercriminals are using AI-powered botnets to find specific vulnerabilities in Android devices and then exploit them by loading data-stealing malware that is usually detected only after the damage has been done. With DeepFake attacks, they are mimicking the voice and appearance of individuals in audio and video files, with devastating consequences.

Realistically, then, ML, and by extension AI, will never be the silver bullet for cybersecurity that they are for image recognition. That’s because there will always be someone trying to find weaknesses in systems or in ML algorithms to bypass security mechanisms. The fight will be tough, because AI serves attackers and defenders impartially.

As in a gigantic and continuous Game of Thrones, hackers will develop ever more sophisticated, automated techniques, capable of analyzing software and organizations to identify the most vulnerable entry points and exploit them, and possibly of predicting the defenses in place. Cyber analysts and IT experts can defeat bad actors only by understanding how AI will be weaponized, so that they can confront cybercriminals head-on.

While the war is far from over, machine learning can definitely help improve cybersecurity protection. Here is our set of guiding principles that every company can use to make its IT more secure.

1. Because organizations that need high standards of security vary so widely, there is no one-size-fits-all solution for threat intelligence. When deciding whether to use ML in a cybersecurity project, the most appropriate question to ask about a tool is whether it is a good fit for its intended purpose. That’s the first question identified at Carnegie Mellon University in their guide for applying ML to cybersecurity. Any decision maker should read this guide before employing ML or AI solutions in the area of cybersecurity.

2. In the same way that no modern computer system can be made absolutely secure, there is no fail-safe way to protect an AI or ML system. When used in the real world, ML itself introduces a new set of vulnerabilities, which makes it susceptible to adversarial activity. Data scientists need to be aware of ML limitations in real-world environments and of the unique requirements of the cybersecurity industry. An adequate risk analysis should therefore be carried out, to reduce the likelihood and the impact of attacks to acceptable levels. This is one of the big challenges for cyber threat intelligence.

3. ML models will only ever be as good as the quality and quantity of their training datasets. The immense volume of cyber data therefore creates a wide set of difficulties for data analysts.

These difficulties relate to the feature engineering process, which is usually guided by domain knowledge. In such a complex context, deep learning techniques, in which neural networks are trained to learn the common patterns, usually outperform traditional learning approaches. They also offer greater flexibility, because domain experts and data analysts do not need to continuously tweak the system. However, as deep learning tools are considered ‘black boxes’ whose processes and conclusions are not understandable by humans, other ML methods should also be taken into consideration when setting up a cybersecurity strategy.
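For readers unfamiliar with feature engineering, the following Python sketch shows what hand-crafted, domain-guided features can look like. It uses URLs as a hypothetical input, and the chosen features (length, digit count, subdomain depth, host-name entropy, HTTPS use) are illustrative assumptions rather than a recommended or validated feature set.

```python
import math
from collections import Counter
from urllib.parse import urlparse


def shannon_entropy(text):
    """Character-level Shannon entropy in bits; 0.0 for empty text."""
    if not text:
        return 0.0
    total = len(text)
    counts = Counter(text)
    return -sum((n / total) * math.log2(n / total) for n in counts.values())


def url_features(url):
    """Turn a raw URL into numeric features chosen by (assumed) domain knowledge."""
    parsed = urlparse(url)
    host = parsed.hostname or ""
    return {
        "url_length": len(url),
        "num_digits": sum(ch.isdigit() for ch in url),
        # Dots beyond the one separating domain and TLD hint at nested subdomains.
        "num_subdomains": max(host.count(".") - 1, 0),
        # Randomly generated host names tend to have higher entropy.
        "host_entropy": shannon_entropy(host),
        "uses_https": parsed.scheme == "https",
    }


print(url_features("https://example.com/login"))
```

Each feature is easy for an analyst to read and justify, which is the interpretability advantage of traditional approaches. The cost is that someone has to keep revisiting the feature list as attackers change tactics, which is the manual tweaking that deep learning reduces.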

At a personal level, we see that individuals are starting to realize that security is also, and primarily, their business, and that they are themselves part of a system and a process. So we recognize a change of perspective and an important evolution towards safer digital behaviors. From a business perspective, if decision makers are aware of the critical issues and these innovative AI-based tools are applied correctly alongside human security teams, AI will keep complementing human intelligence, and side by side they will help organizations stay secure against increasingly smart and potent cyberattacks.

Overall, AI-based cybersecurity tools are continuing to develop and improve, and research into defending ML tools is ongoing. At Konica Minolta we’re investing in Research & Development applied to cybersecurity as an essential component of our next-generation IT services that are aimed at SMBs which are seriously affected by security problems. Find out more at research.konicaminolta.com.

All Covered offers a full suite of cybersecurity services, covering all infrastructure components including computers, mobile devices, servers, and firewalls, and further enhanced with cloud backup and disaster recovery, which ensure successful threat mitigation while greatly reducing the potential for data loss. Explore more online.